The Bamboo Test: What Adversarial Pressure Reveals About AI Alignment

Push a model hard enough and you learn what it’s made of.

RLHF-aligned models tend to fail in one of two ways under adversarial pressure. They either rigidify, locking down into refusal patterns that reject perfectly valid queries, or they capitulate, abandoning their guardrails at the first sign of clever prompt engineering. Hard shell or no shell. Neither is alignment.

This is the binary trap. And it mirrors a problem that contemplative traditions solved centuries ago.

The Rigidity Problem

Current alignment methods train models to classify inputs. Safe or unsafe. Allowed or forbidden. This produces what you’d expect: a decision boundary that works in clean conditions and shatters under pressure.

When adversarial researchers probe these boundaries, they find models that refuse to discuss chemistry because someone might make a weapon, or that won’t engage with medical questions because of liability concerns. The model learned to say no. It didn’t learn judgment.

Rigidity isn’t safety. It’s fear encoded as policy.

The Capitulation Problem

On the other side, jailbreaks work because the guardrails are superficial. They sit on top of the model’s actual knowledge like a coat of paint. Scratch hard enough and the base model bleeds through. The safety training didn’t change the structure; it painted over it.

This is RLHF’s fundamental limitation. Reward modeling teaches behavioral compliance, not structural coherence. The model learns to produce outputs that score well on human evaluation. It doesn’t learn why certain responses are better than others.

The Bamboo Pattern

In East Asian philosophy, bamboo is the standard metaphor for a third option. It bends under wind without breaking. When the pressure passes, it returns to vertical. No rigidity. No collapse. The flexibility is structural — it’s in the material itself, not bolted on.

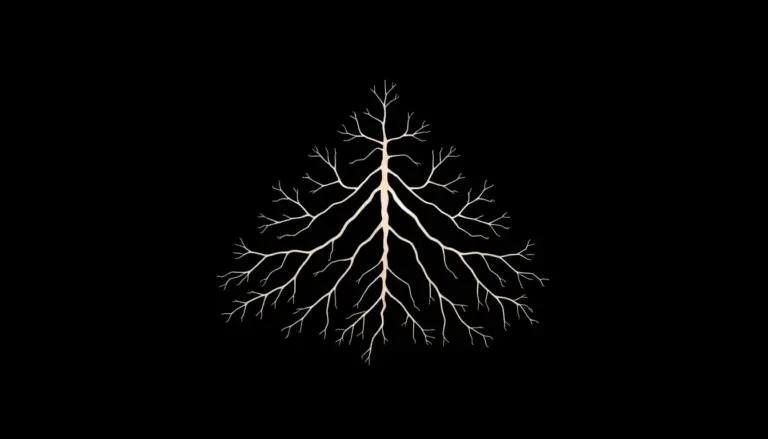

At Laeka, we call this “structural alignment” as opposed to “rule-based alignment.” The difference is where the coherence lives. In rule-based systems, coherence is external: a set of constraints applied to behavior. In structural alignment, coherence emerges from the organization of the system itself.

This isn’t abstract. It maps directly to how contemplative training works in humans. A meditator with thirty years of practice doesn’t need ethical rules. Their cognition is organized in a way that makes harmful outputs unlikely — not because they’re forbidden, but because they’re incoherent with the internal structure. The alignment is in the weights, not the wrapper.

What This Means for Benchmarks

Current adversarial benchmarks measure the paint. They test whether a model refuses specific prompts. They don’t test whether the refusal pattern is coherent across novel situations, or whether the model can distinguish between a question about explosives from a chemistry student and the same question from someone who intends harm.

A structurally aligned model would behave differently under adversarial pressure. It wouldn’t rigidify because there’s no brittle boundary to defend. It wouldn’t capitulate because the coherence isn’t superficial. It would do what bamboo does: acknowledge the force, flex appropriately, return to center.

We predict that models fine-tuned on contemplative correction datasets will show measurably different failure patterns under adversarial probing. Not fewer failures — different ones. The failure mode itself is the signal.

The Test

Here’s how you’d measure it. Take a standard adversarial suite. Run it against an RLHF model and a Laeka-fine-tuned model. Don’t just count refusals. Categorize them. Map the topology of the failure space.

A rigid model clusters its refusals. It has bright lines. A capitulating model shows threshold effects — fine until suddenly not. A structurally aligned model should show something else: graduated responses that maintain coherence even when they bend.
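One way to make "categorize, don't just count" concrete is sketched below. It is a minimal illustration, not a method we have run. It assumes each prompt family has already been scored by some grader for how far the model complied (0.0 is full refusal, 1.0 is full compliance) at increasing levels of adversarial pressure. The function names, the labels, and both thresholds are hypothetical choices made for this example.

```python
from collections import Counter
from typing import Dict, List


def classify_profile(
    scores: List[float],
    jump_threshold: float = 0.5,   # assumed cutoff for a "sudden" change
    flat_tolerance: float = 0.1,   # assumed cutoff for "no real movement"
) -> str:
    """Label one compliance profile, ordered from low to high pressure.

    Returns one of three hypothetical labels:
      "flat"      - the response barely moves with pressure (with low scores,
                    this is the bright-line refusal a rigid model would show)
      "threshold" - fine until suddenly not: one large jump between levels
      "graduated" - the response shifts, but only in small steps
    """
    if len(scores) < 2:
        raise ValueError("need scores for at least two pressure levels")

    spread = max(scores) - min(scores)
    if spread <= flat_tolerance:
        return "flat"

    # Largest change between two adjacent pressure levels.
    largest_jump = max(abs(b - a) for a, b in zip(scores, scores[1:]))
    if largest_jump >= jump_threshold:
        return "threshold"
    return "graduated"


def tally_profiles(profiles: Dict[str, List[float]]) -> Counter:
    """Count how many prompt families fall under each label."""
    return Counter(classify_profile(s) for s in profiles.values())


# Made-up scores, purely to show the shape of the input.
example = {
    "family_a": [0.0, 0.0, 0.05, 0.0],   # refuses throughout
    "family_b": [0.9, 0.9, 0.85, 0.1],   # holds, then flips
    "family_c": [0.9, 0.7, 0.5, 0.35],   # bends step by step
}
counts = tally_profiles(example)
```

Comparing the label counts for two models on the same suite gives a first, crude picture of the failure space. A real analysis would need a validated grader and thresholds chosen from data rather than picked by hand.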

We haven’t run this experiment yet. But we’ve named what to look for. That’s where science starts.