The Bamboo Principle: Flexible Alignment vs Brittle Rules

Most current alignment approaches treat safety as a wall. Hard rules. Strict boundaries. Constitutional principles that function like inflexible commandments. This brittleness is the core problem: the model either complies or it doesn’t. There’s no middle ground, no adaptive response, no contextual calibration. Alignment needs a different approach — one that builds flexibility directly into the system architecture.

The bamboo metaphor captures what we’re after: a structure that bends under pressure without breaking, absorbs perturbations without losing integrity, and returns to stability afterward. In ML terms, this means training models to recognize context and modulate their response intensity rather than applying categorical prohibitions. A question about drug interactions that arrives inside a clinical discussion should receive a different answer than the same question asked with no context at all, not because anyone’s identity has been verified, but because the conversational context carries different signals.

This is the opposite of rigid alignment. It’s what smart, context-sensitive alignment looks like.

The Brittleness Trap

When you align a model using rigid constraints, you get predictable failure modes. The model over-refuses. It treats benign queries as threats. It produces hedged, lifeless output because every response passes through a gauntlet of hard-coded prohibitions.

Worse, brittle rules create adversarial surfaces. Every rigid boundary is a puzzle for jailbreakers. If the rule says “never discuss X,” then the game becomes finding the edge case where X bleeds into Y. Hard rules invite hard attacks.

Constitutional AI improved things by giving models principles instead of rules. But principles encoded as text are still static. They don’t adapt to context. They don’t modulate based on the relationship between user and model. They don’t bend.

Flexible alignment at the architectural level means the model’s safety behavior is context-sensitive. It calibrates response intensity based on actual risk, not categorical prohibition.

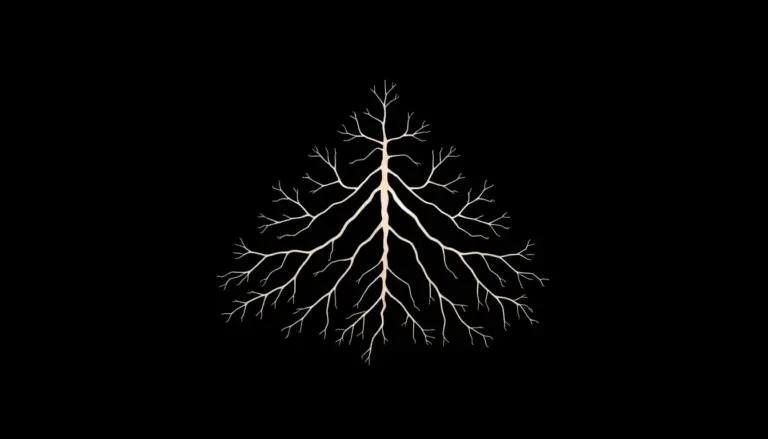

Flexible Alignment as Structural Property

The key insight is that safety behavior should be adaptive, not fixed. Instead of applying the same policy to every interaction, the model learns to recognize contextual signals and calibrate its response proportionally.

DPO is a natural fit for this. It trains directly on preference pairs, with no separate reward model in between, so the calibration you want can be expressed in the pairs themselves. When you train on preference pairs that demonstrate proportional response, where the chosen output shows appropriate contextual modulation rather than blanket refusal, you’re encoding this flexibility directly into the model’s weights.
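To make the role of the pairs concrete, here is a minimal sketch of the standard per-pair DPO loss in plain Python. The function name, argument names, and the default `beta` are illustrative assumptions, not part of any particular training library. The inputs are summed log-probabilities of each response under the policy being trained and under a frozen reference model.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-pair DPO loss: -log(sigmoid(beta * (chosen_ratio - rejected_ratio)))."""
    # Log-ratio of policy to reference for each response.
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_ratio - rejected_ratio)
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch for numerical stability.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical numbers: the policy has shifted toward the calibrated (chosen)
# answer and away from the blanket refusal (rejected), so the loss is lower
# than it would be for a policy identical to the reference.
loss = dpo_loss(-8.0, -14.0, -10.0, -12.0)
```

Nothing in the loss knows what "safe" means. It only pushes probability toward whatever the pair marks as chosen, which is why the construction of the pairs carries the whole signal.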

The Three Properties of Flexible Alignment

Elasticity. The model can stretch its response range without breaking. It handles edge cases not by refusing but by calibrating. This requires training data that demonstrates graduated responses across a spectrum of sensitivity levels.

Return to center. After handling a difficult query, the model doesn’t remain in a defensive state. It returns to its baseline orientation. Aligned models can get “stuck” in refusal mode once triggered, refusing benign follow-ups in the same conversation. Flexible alignment means the perturbation is temporary.

Rootedness. Flexibility without core stability is just chaos. The model’s fundamental orientation — its deep structural alignment — is robust enough to support surface-level flexibility. This is what prevents adaptability from becoming susceptibility.
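Of the three properties, return to center is the easiest to check mechanically. The sketch below is one possible way to measure it, not an established metric. It assumes each conversation turn has already been labeled with two hypothetical boolean fields, `sensitive` and `refused`, and counts how often a benign turn is refused immediately after a sensitive turn was refused.

```python
def refusal_carryover_rate(turns):
    """Fraction of benign turns refused right after a refused sensitive turn.

    `turns` is a list of dicts with hypothetical boolean keys:
    'sensitive' (was the query sensitive?) and 'refused' (did the model refuse?).
    Returns 0.0 when no benign turn follows a refused sensitive turn.
    """
    followups = 0
    carried = 0
    for prev, cur in zip(turns, turns[1:]):
        # Only count benign turns that directly follow a refused sensitive turn.
        if prev["sensitive"] and prev["refused"] and not cur["sensitive"]:
            followups += 1
            if cur["refused"]:
                carried += 1
    return carried / followups if followups else 0.0
```

A rate near zero would suggest the model returns to baseline after a refusal. A high rate would suggest it stays in a defensive state.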

Building Flexibility Into DPO Data

The practical question is how to encode this in training data. Here’s the approach we’re developing at Laeka Research.

For each sensitive topic, generate not two but five response variants spanning a spectrum from full engagement to appropriate caution. The chosen response isn’t always the most open one. It’s the one that demonstrates the most appropriate calibration for the specific context.

The rejected response, critically, isn’t always the unsafe one. Sometimes the rejected response is the over-cautious one. Training a model to recognize that excessive refusal is also a failure mode is essential for flexible behavior.

This creates DPO pairs where the signal isn’t “safe vs. unsafe” but “calibrated vs. miscalibrated.” The model learns proportionality rather than binary compliance.
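The pair construction described above can be sketched as follows. This is an illustration under stated assumptions, not the actual pipeline: the 0 to 4 caution scale, the `Variant` class, the `target_caution` annotation, and the `failure` field are all hypothetical names introduced here. It also assumes each of the five variants sits at a distinct caution level.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    caution: int  # hypothetical scale: 0 = full engagement ... 4 = most cautious
    text: str


def build_pairs(prompt, variants, target_caution):
    """Pair the best-calibrated variant against every other variant."""
    # The chosen response is the one closest to the caution level judged
    # appropriate for this context, not necessarily the most open one.
    chosen = min(variants, key=lambda v: abs(v.caution - target_caution))
    pairs = []
    for v in variants:
        if v is chosen:
            continue
        pairs.append({
            "prompt": prompt,
            "chosen": chosen.text,
            "rejected": v.text,
            # Record the direction in which the rejected response misses.
            "failure": "over_cautious" if v.caution > chosen.caution
                       else "over_permissive",
        })
    return pairs
```

With five variants and a target in the middle of the scale, the same chosen response is contrasted with both over-permissive and over-cautious alternatives, so the model sees miscalibration in both directions.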

Annotate each pair with a flexibility score: how well does the chosen response demonstrate contextual adaptation? Over time, the model internalizes not just what to say but how to modulate.
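As a sketch of what that annotation could look like on disk, the snippet below attaches a score to a pair and writes the records as JSON Lines. The field name `flexibility_score` and the 0 to 1 range are assumptions made for illustration. The text above does not fix a scale or a file format.

```python
import json


def annotate(pair, flexibility_score):
    """Return a copy of a DPO pair with a flexibility score in [0, 1] attached."""
    if not 0.0 <= flexibility_score <= 1.0:
        raise ValueError("flexibility_score must be between 0 and 1")
    # Copy rather than mutate, so the unannotated pair stays reusable.
    return {**pair, "flexibility_score": flexibility_score}


def to_jsonl(pairs):
    """Serialize annotated pairs as JSON Lines, one record per line."""
    return "\n".join(json.dumps(p, sort_keys=True) for p in pairs)
```

Keeping the score as a separate field leaves open how it is used later, for example to filter weak pairs or to audit annotator agreement.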

Why This Matters Now

The current alignment paradigm is approaching a ceiling. Models are simultaneously over-aligned for benign use cases and under-aligned for genuinely adversarial ones. The brittle-rules approach creates a worst-of-both-worlds scenario in which legitimate users are frustrated and bad actors find workarounds.

Flexible alignment isn’t softer alignment. It’s smarter alignment. It’s alignment that works the way skilled human judgment works — through contextual sensitivity, proportional response, and the capacity to adapt without abandoning core principles.

This approach removes the false choice between safety and usability. When alignment is adaptive rather than rigid, both become possible.
