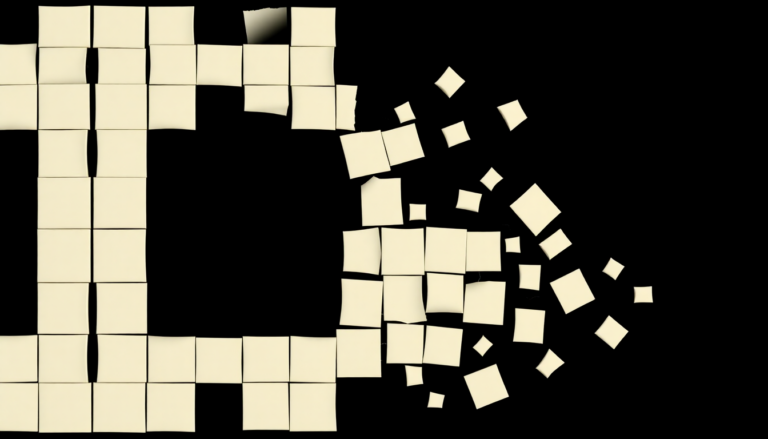

Binary Thinking as Computational Overhead: Why Fewer Categories Means Better Outputs

Binary thinking forces complex situations into either/or choices, discarding information in the process. Discarding that information has a cost. In computational terms, binary thinking is overhead. This applies to AI systems. It applies to human organizations. It…