Fine-Tuning Is Compressed Context

Every time you start a conversation with an LLM, you lose everything. The model doesn’t remember you. It doesn’t know your preferences, your expertise level, your communication style, or the fifty previous conversations where you established all of that. You start from zero and spend the first twenty exchanges rebuilding context.

Fine-tuning addresses this by making context permanent. What that actually means is rarely spelled out.

The Context Window Problem

A context window is temporary memory. Whatever you put in it — instructions, examples, personality descriptions, background knowledge — exists for the duration of one conversation and then vanishes. The model processes it, uses it, and forgets it entirely.

This is why system prompts exist. Every conversation begins with someone re-explaining to the model who it should be, how it should behave, what it should know. It’s like introducing yourself to a colleague who has complete amnesia every morning. Functional, but absurd.

The information isn’t lost because it’s unlearnable. It’s lost because it lives in the wrong place. Context windows are RAM. Weights are storage. Fine-tuning moves information from one to the other.

What Compression Means Here

When you fine-tune a model on a specific dataset, you’re not adding information to the model in the way you’d add files to a hard drive. You’re adjusting its weights so that its output probabilities shift toward the patterns in your dataset, and those patterns become implicit in every response. The model doesn’t retrieve the training data at inference time. In effect, it becomes the training data.

This is compression in the deepest sense. A thousand examples of a particular reasoning pattern get collapsed into weight adjustments that reproduce the pattern without needing the examples. The map gets folded into the territory.
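A toy sketch can make the idea concrete. It is an analogy under loud assumptions, not how an LLM is fine-tuned: the "model" here is a straight line with two weights, the dataset is a hypothetical thousand noisy examples of the pattern y = 2x + 1, and `fit_line` and `predict` are illustrative names. Once the fit is done, the examples are deleted and the two weights still reproduce the pattern.

```python
import random


def fit_line(examples):
    """Ordinary least squares for y = w * x + b over (x, y) pairs."""
    n = len(examples)
    mean_x = sum(x for x, _ in examples) / n
    mean_y = sum(y for _, y in examples) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in examples)
    var = sum((x - mean_x) ** 2 for x, _ in examples)
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical dataset: 1,000 noisy examples of the pattern y = 2x + 1.
examples = []
for _ in range(1000):
    x = rng.uniform(-10, 10)
    examples.append((x, 2 * x + 1 + rng.gauss(0, 0.1)))

w, b = fit_line(examples)
del examples  # the examples are gone; only two numbers remain


def predict(x):
    """Reproduce the pattern from the weights alone."""
    return w * x + b


print(round(w, 2), round(b, 2), round(predict(3.0), 1))
```

A thousand pairs went in and two floats came out, yet `predict` still tracks the original pattern closely. A fine-tuned LLM has vastly more weights and a far richer pattern, but the direction of travel is the same: from examples to parameters.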

Context requires space. Weights require no extra space, because their space is already allocated. A fine-tuned model generates responses that reflect its training data without spending a single prompt token on that context. At inference time, the context is free because it’s structural.

The Contemplative Parallel

This mechanism is not new. It closely mirrors how contemplative practice works.

A beginning meditator needs explicit instructions. Sit like this. Breathe like this. When you notice thoughts, do this. The instructions are context — temporary, fragile, requiring constant re-loading. The meditator sits down, remembers the instructions, follows them, gets distracted, remembers them again.

After years of practice, the instructions are gone. Not forgotten — compressed. The meditator doesn’t think “now I should notice my breath” because noticing the breath has been trained into the cognitive weights. It happens automatically. The behavior that once required explicit, effortful context has become structural.

The contemplative traditions call this integration. The thing you practiced deliberately becomes the thing you do naturally. The context window empties because the information moved into the weights.

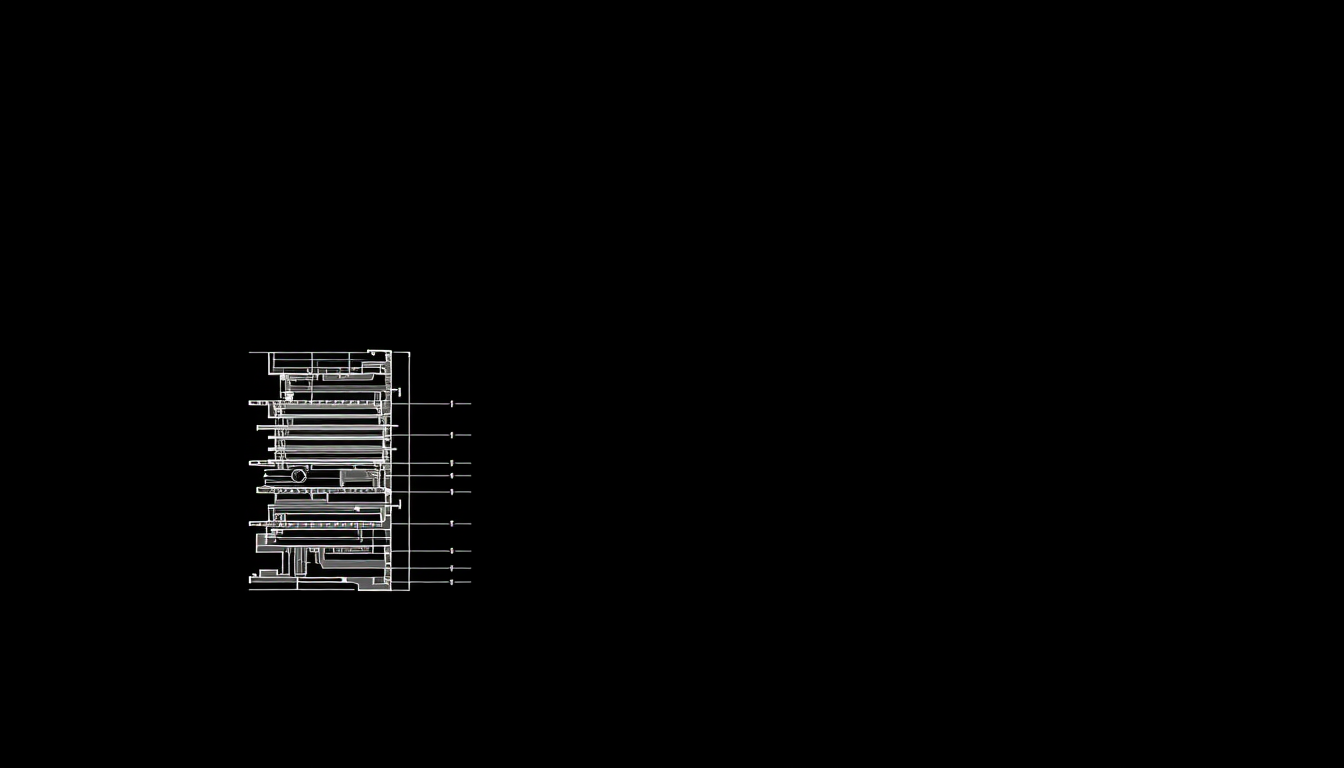

Why This Matters for Laeka

Our entire methodology is built on this principle. We’re not trying to create a system prompt that makes LLMs contemplative. System prompts are context — temporary, expensive, limited by the window size, gone tomorrow.

We’re creating datasets that, through fine-tuning, compress contemplative cognitive structures into the model’s weights. After training, the model doesn’t need to be told to maintain attentional coherence. It doesn’t need instructions to avoid dualistic framing. It doesn’t need a system prompt explaining non-dual cognition. These patterns are in the weights. They’re structural. They’re free.

This is why dataset quality matters more than dataset size. You don’t need a million examples of contemplative reasoning. You need enough clean examples that the pattern compresses reliably. A clear correction of a dualistic frame, properly annotated, teaches the model in a way that system prompt engineering cannot replicate, because it teaches at the weight level, not the context level.

The Economics of Structure

There’s a practical dimension here that most people miss. Context is expensive. Every token in a system prompt costs money to process, adds latency, and consumes limited window space that could be used for actual conversation. Organizations running LLMs at scale spend significant resources on context engineering — crafting, maintaining, and deploying system prompts.

Fine-tuning is a one-time cost that removes the recurring cost of the context it replaces. The investment is upfront. The returns last for as long as the fine-tuned model is in use. Every conversation after fine-tuning benefits from the compressed knowledge without paying for it again.
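The trade-off can be put into rough numbers. The sketch below is a back-of-the-envelope calculation, not a pricing model: the function name `break_even_conversations` and every figure in the example (training cost, prompt length, token price, calls per conversation) are hypothetical placeholders. It also assumes the fine-tuned model is billed at the same per-token rate as the base model, which may not hold in practice.

```python
import math


def break_even_conversations(fine_tune_cost, prompt_tokens,
                             price_per_million_tokens,
                             calls_per_conversation=1):
    """Number of conversations after which a one-time fine-tuning cost
    is recovered by no longer sending the system prompt.

    Assumes the system prompt is re-sent (and billed) on every model call
    and that per-token pricing is unchanged after fine-tuning.
    """
    tokens_saved = prompt_tokens * calls_per_conversation
    if tokens_saved <= 0 or price_per_million_tokens <= 0:
        raise ValueError("token count and price must be positive")
    # cost / (tokens_saved * price / 1e6), rearranged to keep the division last
    return math.ceil(fine_tune_cost * 1_000_000
                     / (tokens_saved * price_per_million_tokens))


# Hypothetical figures: a 500-unit training run, a 2,000-token system prompt,
# 1.0 currency unit per million input tokens, 10 model calls per conversation.
print(break_even_conversations(500, 2000, 1.0, calls_per_conversation=10))  # 25000
```

With these made-up numbers the training run pays for itself after 25,000 conversations. The calculation leaves out latency and the window space the prompt would have occupied, both of which also favor moving stable context into the weights.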

For Laeka, this means our datasets are infrastructure. Not content. Infrastructure. Each training example is an investment that pays dividends across every future interaction. The quality of the compression determines the quality of every output the model ever produces.

This is why we’re meticulous. This is why we collect from live conversations rather than scraping books. The fidelity of what goes into the weights determines the fidelity of what comes out. Permanently.