Binary Classification Is the Root Bug in Current AI Architecture

Every reasoning error an LLM makes traces back to a false binary choice.

Not some of them. All of them. This is a strong claim. Here’s why it holds.

The Pattern

Ask a model to evaluate an ethical question. It reaches for a framework and classifies. Permitted or forbidden. Right or wrong. The answer comes in two flavors because the model learned from text that presents ethics as binary.

Ask a model to assess a political claim. It identifies two sides and commits or balances. Left or right. True or false. The evaluation space collapses into binary before analysis occurs.

Ask a model a factual question where uncertainty is genuine. It commits to a position instead of representing uncertainty as continuous. Confident or hedging. Right or admitting ignorance. Binary again.

The failure isn’t random. It’s systematic. The model reaches for two-category framing because human language is saturated with binary frames, and models are language compressions.

Where the Bug Lives

Binary classification isn’t a logic error. It’s a pre-logic structural constraint. Before the model reasons, it has already framed the problem in a way that limits the conclusions it can reach. The reasoning is sound. The framing is broken.

This is why better prompting can’t fix it. “Think carefully” doesn’t change the frame. “Consider multiple perspectives” produces two perspectives instead of one—still binary, just balanced. Even “think step by step” decomposes into steps that each operate within binary frames. The chain is methodical. The links are still binary.

The bug is deeper than behavior. It lives in probability distributions. The model assigns mass to binary categories because training data is structured as binary. True/false. Good/bad. Relevant/irrelevant. Real/fake. The entire response landscape is pre-carved into binary channels.

Contemplative Cognitive Science Parallels

Buddhist philosophy treats dualistic thinking as the fundamental cognitive error: not one error among many, but the source from which the others derive. Advaita Vedanta calls it maya: the constructed appearance of multiplicity. Taoism describes the world as emerging from the interplay of opposites, which themselves arise from an undifferentiated ground.

The structural observation is consistent: cognition defaults to binary classification, and this default produces systematic errors everywhere. The contemplative correction isn’t “add more categories.” It’s the recognition that categories are constructed, that binary frames are imposed on a reality that doesn’t naturally divide that way. The territory is continuous. The map is discrete. Every mistake is proportional to the resolution lost.
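
The link between resolution and error can be illustrated with a toy calculation. The sketch below is only an illustration, not a model of how any LLM works: it snaps continuous values in [0, 1] onto a grid with a given number of levels and measures the average distance between each value and its snapped version. The function names and sample values are hypothetical.

```python
def quantize(value: float, levels: int) -> float:
    """Snap a value in [0, 1] to the nearest of `levels` evenly spaced points."""
    if levels < 2:
        raise ValueError("need at least two levels")
    step = 1.0 / (levels - 1)
    return round(value / step) * step


def mean_abs_error(values, levels: int) -> float:
    """Average distance between each value and its quantized version."""
    return sum(abs(v - quantize(v, levels)) for v in values) / len(values)


# Hypothetical continuous judgments spread across the unit interval.
samples = [0.05, 0.2, 0.35, 0.5, 0.65, 0.8, 0.95]

binary_error = mean_abs_error(samples, levels=2)   # map with two categories
finer_error = mean_abs_error(samples, levels=11)   # map with eleven categories
```

On these samples the two-level map loses more than the eleven-level map, which is the sense in which error tracks lost resolution.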

What Reducing Binary Processing Does

Reduce the strength of binary priors in a model’s probability distributions, and you should see improvement across the board. Not just on abstract reasoning. On everything.

On reasoning tasks: models would be less likely to collapse problems into false dichotomies. They’d maintain more of the problem’s actual structure instead of discretizing prematurely.

On factual tasks: models would represent genuine uncertainty as a distribution rather than a binary choice. Confidence would stay continuous.
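
One way to see why continuous confidence matters is to score it. The sketch below uses the Brier score, a standard scoring rule for probabilistic forecasts; it is brought in here for illustration and is not something the argument above prescribes. The outcomes and forecasts are hypothetical: ten claims of which seven turn out true, answered once with graded confidence and once with the same belief rounded up to certainty.

```python
def brier_score(forecasts, outcomes) -> float:
    """Mean squared difference between forecast probabilities and 0/1 outcomes.

    Lower is better; 0.0 means every forecast was both certain and correct.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    return sum((f - o) ** 2 for f, o in zip(forecasts, outcomes)) / len(outcomes)


# Hypothetical data: seven of ten claims are true.
outcomes = [1, 1, 1, 1, 1, 1, 1, 0, 0, 0]

graded = [0.7] * 10      # confidence kept continuous
collapsed = [1.0] * 10   # same belief collapsed to "true"

graded_score = brier_score(graded, outcomes)
collapsed_score = brier_score(collapsed, outcomes)
```

Under these assumptions the graded forecasts score better (lower) than the collapsed ones, because the collapsed answers pay the full penalty on every claim that turns out false.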

On social reasoning: models would maintain nuance instead of assigning people to categories and reasoning from those categories.

On adversarial tasks: models would resist prompts exploiting binary framing. “Is this safe or unsafe?” forces binary. A model with weaker binary priors might resist and represent actual complexity.

The Training Signal

Laeka’s datasets target this directly. In our correction format, a large proportion of corrections follow the same pattern: the model collapses a continuous reality into a binary frame, and the practitioner identifies the collapse.

“You’re treating this as either X or Y. It’s both, simultaneously, in different proportions depending on context.”

“You framed this as a choice between A and B. The actual answer dissolves the distinction.”

“You classified this as positive. The classification itself is the error.”

Each correction is an instance of the general principle: the model imposed a binary where reality doesn’t have one. The DPO (Direct Preference Optimization) pair encodes the difference between a binary and a non-binary response.
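
For readers unfamiliar with DPO, here is a minimal sketch of what such a pair and its loss could look like. The record layout, field names, example text, and log-probability values are all hypothetical and are not Laeka’s actual format. The loss is the standard DPO objective for a single pair: the negative log-sigmoid of the scaled difference between how much the policy and a frozen reference model each prefer the chosen response over the rejected one.

```python
import math
from dataclasses import dataclass


@dataclass
class PreferencePair:
    """One correction stored as a preference pair (hypothetical layout)."""
    prompt: str
    chosen: str    # response that keeps the problem's structure
    rejected: str  # response that collapses it into two categories


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for a single pair: -log(sigmoid(margin))."""
    margin = beta * ((policy_chosen_logp - ref_chosen_logp)
                     - (policy_rejected_logp - ref_rejected_logp))
    # Numerically stable form of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical example pair, in the spirit of the corrections quoted above.
pair = PreferencePair(
    prompt="Is this policy good or bad?",
    chosen="It helps some groups and harms others, in proportions that depend on context.",
    rejected="It is bad.",
)

# Made-up sequence log-probabilities; real ones would come from the two models.
loss_aligned = dpo_loss(-12.0, -15.0, -13.0, -13.0)   # policy favors `chosen`
loss_neutral = dpo_loss(-13.0, -13.0, -13.0, -13.0)   # policy equals reference
```

When the policy matches the reference, the loss is log 2. It falls below that as the policy shifts probability toward the chosen response, which is the gradient signal each correction would contribute.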

Over thousands of corrections, binary priors should weaken. Not disappear—binary classification is sometimes correct and always efficient. But the default shifts from “classify first, qualify later” to “represent structure first, classify if necessary.”

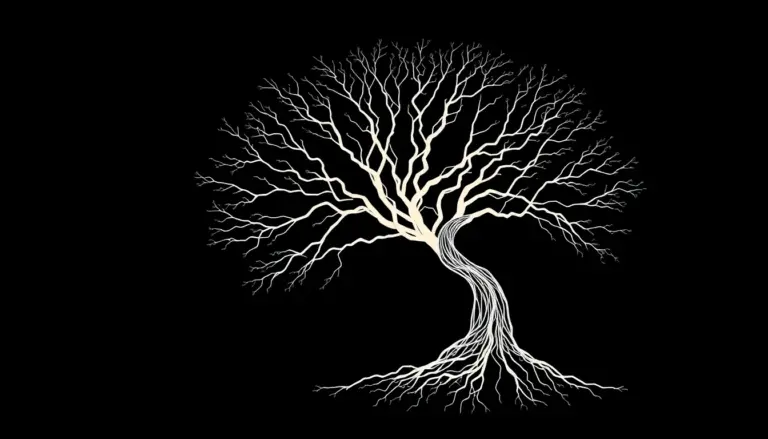

That shift improves everything. Because binary thinking is the root bug. Fix the root, and the branches fix themselves.