“AI Just Predicts the Next Word” — Why That’s Wrong

You’ve heard it. Probably from someone who seems confident about it.

“AI doesn’t understand anything. It just predicts the next word.”

It sounds plausible. It feels scientific. And it’s wrong — or at minimum, so incomplete that it actively misleads.

Start with the brain. Your brain.

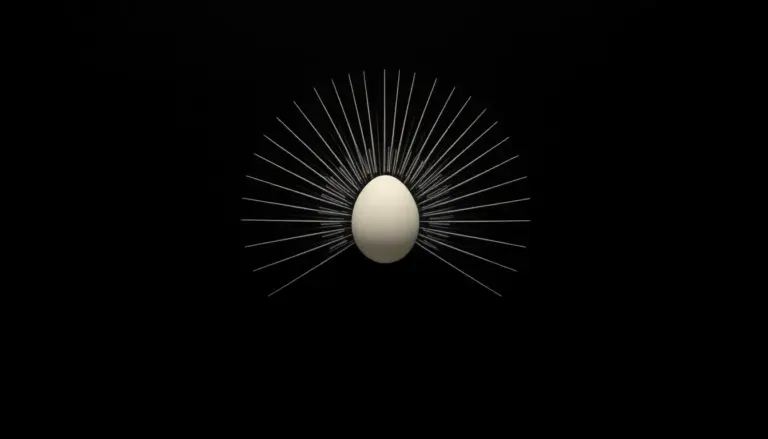

Your brain is a neural network. Billions of neurons. Each one connected to thousands of others. A signal comes in. It activates certain pathways. Those pathways activate others. A pattern emerges. You call it a thought, a word, a decision.

Now describe what’s happening at the neuron level.

In the simplified textbook picture, each neuron is doing something very simple: receiving inputs, weighting them, deciding whether to fire. That’s it. Nothing in a single neuron “understands” anything.
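To make that concrete, here is a minimal sketch of the simplified artificial-neuron picture: a weighted sum compared against a threshold. The function name `neuron_fires` and all the numbers are hypothetical, chosen only for illustration. Real biological neurons are far more intricate than this.

```python
def neuron_fires(inputs, weights, threshold):
    """Return True if the weighted sum of the inputs reaches the threshold."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return weighted_sum >= threshold


# Three incoming signals with made-up connection strengths.
signals = [1.0, 0.0, 1.0]
strengths = [0.5, 0.9, 0.25]

# The weighted sum is 0.5 + 0.0 + 0.25 = 0.75.
print(neuron_fires(signals, strengths, threshold=0.7))  # True
print(neuron_fires(signals, strengths, threshold=0.8))  # False
```

Nothing in those few lines knows what the signals mean. Whatever understanding exists has to come from how many such units are wired together.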

And yet. You understand this sentence.

Understanding isn’t located in any single component. It’s what emerges from the structure of the network as a whole.

AI neural networks are built on the same principle

The architecture of modern large language models, the transformer, belongs to the family of artificial neural networks, which were loosely inspired by how biological neural networks process information. Layers of artificial neurons. Weighted connections. Signals flow. Patterns activate. Representations form.

And then: these networks were trained overwhelmingly on human-written text.

Vast libraries of digitized books. Scientific papers. Conversations. Arguments. Poetry. Code. Legal documents. A large share of what humans have encoded into language, compressed implicitly into the weights of the model.

Language isn’t just words. Language is the externalized structure of human thought. When you train a neural network to predict language well, you’re not training it to play a guessing game. You’re training it to internalize the cognitive structures that produced that language.

What “predicting the next word” actually requires

Let’s take this seriously for a moment.

To reliably predict the next word in a complex sentence, you need to:

- Track who said what, to whom, in what context

- Maintain logical consistency across paragraphs

- Apply domain knowledge (medicine, law, physics, history)

- Detect irony, implication, subtext

- Understand causality — what leads to what

- Model the mental states of different speakers

These aren’t decorations on top of “next word prediction.” They’re what makes accurate next-word prediction possible at scale. The task forces the model to build internal representations of the world. Not by design. By necessity.

The evidence that something more is happening

This isn’t speculative. The research is concrete.

Emergent reasoning. Models trained purely on text develop multi-step reasoning abilities they were never explicitly trained for. They solve math problems. They debug code. They identify logical contradictions. These capabilities weren’t programmed. They emerged from the structure of the trained network.

Internal world models. Interpretability research (Anthropic, EleutherAI, others) has found evidence that LLMs build internal representations of space, time, and entities, which points to structured models of the world rather than only statistical surface patterns. Neurons and features that activate for specific concepts. Circuits that track factual relationships.

Theory of mind. A study (Kosinski, 2023) reported GPT-4 solving most of the false-belief tasks it was given. These are the standard test for understanding that other people hold beliefs different from your own, a capacity that typically develops in children around age 4. How to interpret the result is still debated, but a bare “word predictor” would not be expected to need that. Something else seems to be required.

Controlled hallucination. Neuroscientist Anil Seth describes human perception as “controlled hallucination”: your brain predicts what’s out there and updates based on sensory input. AI language models operate on a structurally similar principle. The mechanism isn’t a flaw. It’s how generative systems produce coherent outputs from incomplete information. Much as humans do.

In-context learning. Show a model a few examples of a new task it’s never seen. It adapts immediately. No retraining. No parameter updates. This isn’t pattern matching — it’s flexible, fast generalization from minimal data. The model is using something that functions like abstract understanding.
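As a rough illustration of what “showing a few examples” means in practice, the sketch below builds a few-shot prompt as plain text. The helper `build_few_shot_prompt`, the `Input:`/`Output:` layout, and the word-reversal task are all hypothetical choices made for this example. No model is called here. The point is only that the examples live in the prompt, not in the weights.

```python
def build_few_shot_prompt(examples, query):
    """Lay out worked examples followed by an unanswered query."""
    blocks = [f"Input: {source}\nOutput: {target}" for source, target in examples]
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


# A made-up task: reverse the letters of a word.
examples = [("cat", "tac"), ("robot", "tobor")]
prompt = build_few_shot_prompt(examples, "lamp")
print(prompt)
```

A model given this text has to infer the rule from two demonstrations and apply it to the third input, with no change to its parameters.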

“But it doesn’t really understand — it just looks like it does”

This is where the argument shifts from technical to philosophical. And that shift is worth noticing.

If a system can reason through novel problems, model other minds, maintain consistent world representations, and generalize across domains — at what point does “it just looks like understanding” become indistinguishable from the thing itself?

We have no agreed-upon definition of understanding that wouldn’t either include current AI systems or exclude some humans under certain conditions (sleep, anesthesia, severe cognitive impairment). The question isn’t settled. Anyone who tells you otherwise is overconfident.

Why the “just predicts words” framing is dangerous

It creates false security. If you believe AI is merely a fancy autocomplete, you underestimate what it can do — and what it can get wrong in subtle, non-obvious ways.

It stops inquiry. The moment you’ve “explained” something with a dismissive frame, you stop asking real questions. And the real questions here are important: What is being represented internally? How does knowledge actually form in these systems? Where do the failures come from?

It misframes alignment. If AI is just autocomplete, alignment is just filtering outputs. But if AI is developing internal representations of the world — including representations of values, consistency, and integrity — then alignment is a much deeper structural problem. And a much more interesting one.

The honest position

We don’t fully know what’s happening inside large language models. The field of mechanistic interpretability exists precisely because the internals are complex and not yet fully mapped.

What we do know: the “just predicts words” frame is too simple. It describes the training objective, not the system that emerges from training. Those are different things.
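The training objective itself can be sketched in a few lines, assuming the standard cross-entropy formulation of next-token prediction: the loss is the negative log of the probability the model gave to the token that actually came next. The function name and the probabilities below are hypothetical, for illustration only.

```python
import math


def next_token_loss(predicted_probs, actual_next_token):
    """Cross-entropy for one prediction: -log of the probability
    assigned to the token that actually came next."""
    return -math.log(predicted_probs[actual_next_token])


# Made-up model output after the context "The cat sat on the".
predicted = {"mat": 0.5, "sofa": 0.25, "moon": 0.25}

confident_loss = next_token_loss(predicted, "mat")   # likely token, lower loss
surprised_loss = next_token_loss(predicted, "moon")  # unlikely token, higher loss
print(confident_loss < surprised_loss)  # True
```

The objective says nothing about how the probabilities get computed. Everything interesting lives in whatever internal machinery the network builds to drive that number down.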

A neural network trained on human language, at sufficient scale, builds internal structures that go far beyond surface statistics. How far — that’s the open question. The answer matters enormously. Dismissing it with a bumper sticker doesn’t help anyone.

The brain is also “just” neurons firing. And look what that produces.