Running a 30B Model on Consumer Hardware: A Practical Guide

Running a 30 billion parameter model on a gaming PC used to be a pipe dream. Now it’s routine. The techniques that made this possible—quantization, memory optimization, and efficient inference—are transforming what’s accessible to individual researchers and small teams.

This isn’t theoretical. You can do this today with hardware you might already own.

Understanding Quantization

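A 30B model stored in full precision (FP32, 4 bytes per parameter) needs roughly 120GB for the weights alone, and still about 60GB in FP16. No consumer GPU has that much VRAM. Quantization closes the gap by storing the weights (and, in some schemes, the activations) at lower numerical precision, typically around 4 bits per weight.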
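The 120GB figure is simple arithmetic: 30 billion parameters at 4 bytes each. A minimal sketch of the same calculation at other precisions, counting weights only (no KV cache, activations, or per-group quantization metadata, so real files come out somewhat larger):

```python
# Approximate storage per weight, in bytes, at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Return approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: {weight_memory_gb(30e9, precision):.0f} GB")
```

At 4 bits the weights alone come to about 15GB, which is why a 24GB card becomes a realistic target.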

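The three names you will meet most often on consumer hardware are GPTQ, GGUF, and AWQ. GPTQ and AWQ are quantization methods; GGUF is a model file format that carries its own family of quantization types. Each makes different tradeoffs between quality, speed, and hardware support.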

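GPTQ is a post-training method that quantizes weights one layer at a time, typically to 4 bits, using approximate second-order information to compensate for rounding error. It runs fast on GPUs and produces high-quality outputs. The downside: quantizing a model yourself requires a calibration dataset and significant compute, though that is a one-time cost and pre-quantized checkpoints are widely available.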

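GGUF is the model file format used by llama.cpp. It packages weights, tokenizer, and metadata in a single file and supports a range of quantization levels, from roughly 2 to 8 bits per weight. It runs on a wide range of hardware and is particularly well suited to CPU inference with some or all layers offloaded to a GPU.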

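AWQ (Activation-aware Weight Quantization) is newer. It uses activation statistics to find the small fraction of weights that matter most and rescales them so they survive quantization with less error. It often matches or beats GPTQ at the same bit width, though results vary by model.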
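Beneath the differences, 4-bit weight quantization comes down to mapping small groups of floating-point weights onto 16 integer levels plus a scale and an offset per group. The sketch below uses naive round-to-nearest with an assumed group size of 32. It is not GPTQ or AWQ, both of which choose the quantized values more carefully, and the function names are illustrative only.

```python
import random


def quantize_group(weights, bits=4):
    """Round-to-nearest quantization of one group to 2**bits integer levels."""
    levels = 2 ** bits - 1                 # 15 steps between 16 levels for 4-bit
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels
    if scale == 0.0:                       # constant group: any nonzero scale works
        scale = 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_group(codes, scale, lo):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale + lo for c in codes]


def quantize_dequantize(weights, group_size=32, bits=4):
    """Quantize a flat weight list group by group and return the reconstruction."""
    restored = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        codes, scale, lo = quantize_group(group, bits)
        restored.extend(dequantize_group(codes, scale, lo))
    return restored


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(128)]
    restored = quantize_dequantize(weights)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max reconstruction error: {worst:.6f}")
```

The reconstruction error per weight is bounded by half a quantization step, so smaller groups (tighter min/max ranges) mean lower error at the cost of storing more scales and offsets.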

Hardware Requirements for 30B Models

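A 30B model quantized to 4-bit typically requires 15-20GB of VRAM, depending on context length and quantization approach. A 24GB card such as the RTX 4090 or RTX 3090 can handle this comfortably. A 12GB card such as the RTX 4070 Super cannot hold the whole model, but it can still run it at moderate context lengths with some layers offloaded to system RAM, at a cost in speed.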

For budget builds, multiple smaller GPUs can be combined, with the model’s layers split across them. Even a single 16GB consumer card can work with smart memory management: keep as many layers as fit in VRAM and offload the rest to system RAM.
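Offloading is usually controlled by choosing how many transformer layers live on the GPU. A rough way to estimate that number, assuming all layers are the same size and setting aside some VRAM for the KV cache and runtime overhead (the 60-layer count and the 3GB reserve below are hypothetical, not properties of any particular model):

```python
def layers_on_gpu(model_gb: float, n_layers: int, vram_gb: float,
                  reserved_gb: float = 3.0) -> int:
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


if __name__ == "__main__":
    # Hypothetical 17 GB quantized model with 60 layers.
    for vram in (12, 16, 24):
        n = layers_on_gpu(model_gb=17.0, n_layers=60, vram_gb=vram)
        print(f"{vram} GB VRAM: {n} of 60 layers on GPU")
```

Treat the result as a starting point and adjust it by watching actual memory use, since context length changes the reserve you need.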

CPU inference is viable with GGUF quantization, though it’s slower. A modern 16-core CPU with 64GB RAM can run a 30B model in 4-bit GGUF format, generating tokens at usable speeds for non-interactive tasks.

Memory Management in Practice

The challenge isn’t just fitting the weights in VRAM. It’s also managing the KV cache: the attention keys and values stored for every token processed so far, which grow linearly with sequence length and with the number of concurrent requests.
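KV cache size can be estimated directly: two tensors (keys and values) per layer, each holding one vector per KV head per token. The sketch below assumes an FP16 cache and a hypothetical configuration of 60 layers, 8 KV heads, and a head dimension of 128; substitute the values from your model’s config.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for every token in the batch."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value  # K and V
    return per_token * seq_len * batch_size


if __name__ == "__main__":
    # Hypothetical model shape; not taken from any specific 30B model.
    for seq_len in (2048, 8192, 32768):
        size = kv_cache_bytes(n_layers=60, n_kv_heads=8, head_dim=128,
                              seq_len=seq_len)
        print(f"{seq_len:>6} tokens: {size / 2**30:.2f} GiB")
```

With these assumed dimensions, 8192 tokens cost a little under 2 GiB. A model that uses full multi-head attention instead of grouped KV heads would need several times more.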

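Paged attention (used by vLLM) allocates the KV cache in small fixed-size blocks instead of one contiguous region per request. The vLLM authors report that earlier serving systems wasted 60-80% of KV cache memory to fragmentation and over-reservation, and that paging cuts the waste to a few percent. Batching multiple requests together improves throughput. Prefix caching (sometimes called context caching) reuses the keys and values already computed for a shared prompt prefix instead of recomputing them.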
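To see why block-based allocation helps, compare two bookkeeping strategies for the same set of requests: reserving the maximum context length up front for each one, versus handing out fixed-size blocks only as tokens arrive. This is a toy model of the accounting, not vLLM’s implementation; the block size of 16 and the request lengths are assumptions for illustration.

```python
import math


def reserved_contiguous(seq_lens, max_len):
    """Token slots reserved when every request pre-allocates max_len."""
    return len(seq_lens) * max_len


def reserved_paged(seq_lens, block_size=16):
    """Token slots reserved when memory is granted in fixed-size blocks."""
    return sum(math.ceil(n / block_size) * block_size for n in seq_lens)


def waste_fraction(reserved, seq_lens):
    """Share of reserved slots that hold no token."""
    return (reserved - sum(seq_lens)) / reserved


if __name__ == "__main__":
    seq_lens = [120, 300, 57, 1020]     # hypothetical in-flight requests
    contiguous = reserved_contiguous(seq_lens, max_len=2048)
    paged = reserved_paged(seq_lens)
    print(f"contiguous: {waste_fraction(contiguous, seq_lens):.1%} wasted")
    print(f"paged:      {waste_fraction(paged, seq_lens):.1%} wasted")
```

With paging, the only unused slots are the tail of each request’s last block, so waste stays small regardless of how far requests fall short of the maximum length.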

These optimizations are no longer theoretical exercises. They’re built into inference frameworks.

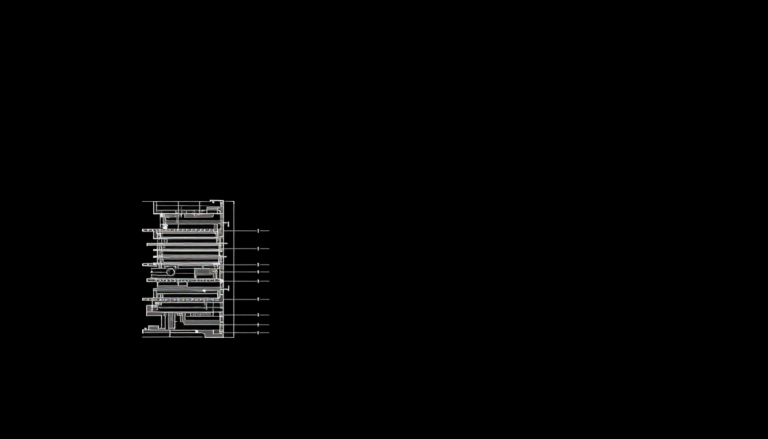

Practical Setup: The Toolchain

llama.cpp is the go-to for local CPU+GPU inference with GGUF models. It’s simple, effective, and requires almost no setup. Download a quantized model, run the binary, done.
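As a sketch of what "run the binary" looks like, the helper below assembles a llama.cpp command line with the model path, prompt, context size, and number of GPU-offloaded layers. It assumes a recent build where the CLI binary is named llama-cli (older builds shipped it as main); the model path and prompt are placeholders, and the helper itself is illustrative, not part of llama.cpp.

```python
import shlex


def llama_cli_command(model_path: str, prompt: str, n_gpu_layers: int = 0,
                      ctx_size: int = 4096, n_predict: int = 128,
                      binary: str = "llama-cli") -> list:
    """Build an argument list for a single llama.cpp generation run."""
    return [
        binary,
        "-m", model_path,           # path to the GGUF file
        "-p", prompt,               # prompt text
        "-n", str(n_predict),       # number of tokens to generate
        "-c", str(ctx_size),        # context window size
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU
    ]


if __name__ == "__main__":
    cmd = llama_cli_command("models/model-q4.gguf",   # placeholder path
                            "Explain KV caching in one paragraph.",
                            n_gpu_layers=40)
    print(shlex.join(cmd))
    # To actually run it: import subprocess; subprocess.run(cmd, check=True)
```

Setting the GPU layer count to zero keeps everything on the CPU; raising it moves more of the model into VRAM until it no longer fits.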

vLLM is the standard for higher-throughput scenarios. It handles batching, paged attention, and multiple GPU setups. More powerful but requires more configuration.

Ollama builds on llama.cpp and wraps it in a friendlier package: one-command model downloads, a model library, and a local HTTP API. It trades some low-level control for convenience and has seen rapid adoption.

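For fine-tuning on consumer hardware, use QLoRA, which trains small LoRA adapters on top of a frozen 4-bit base model; tools like Unsloth make this practical on a single GPU. llama.cpp and vLLM are inference engines, so they come in afterward: export the fine-tuned model and serve it with either one.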
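QLoRA fits on consumer hardware because the quantized base weights stay frozen and only small low-rank adapter matrices are trained. For a weight matrix of shape d_out by d_in, a rank-r adapter adds r * (d_in + d_out) trainable parameters. A back-of-the-envelope count, assuming a hypothetical model with hidden size 6144 and 60 layers, with adapters on four square attention projections per layer (a simplification; real models often have non-square projections and more adapted matrices):

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters added by one rank-`rank` LoRA adapter."""
    return rank * (d_in + d_out)


def total_lora_params(hidden_size: int, n_layers: int,
                      matrices_per_layer: int, rank: int) -> int:
    """Total adapter parameters, assuming square hidden-by-hidden matrices."""
    per_matrix = lora_params(hidden_size, hidden_size, rank)
    return n_layers * matrices_per_layer * per_matrix


if __name__ == "__main__":
    # Hypothetical dimensions chosen for illustration only.
    trainable = total_lora_params(hidden_size=6144, n_layers=60,
                                  matrices_per_layer=4, rank=16)
    print(f"trainable adapter parameters: {trainable:,}")
    print(f"share of a 30B model: {trainable / 30e9:.3%}")
```

Under these assumptions the adapters come to roughly 47 million parameters, a fraction of a percent of the base model, so optimizer state and gradients stay small.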

The Feasibility Threshold

Three years ago, running a 30B model required serious hardware investment. Today, it requires modest hardware and free software. The barrier isn’t cost anymore. It’s knowledge.

Learning quantization, memory optimization, and batching strategies takes time. But the payoff is massive: models that were locked behind API walls can now run on hardware you own.

Laeka Research — laeka.org