Human-AI Symbiosis: Beyond Tool Use to True Partnership

You don’t use a partner. You work with one.

We’ve been thinking about AI wrong. The default metaphor is tools—calculators, search engines, productivity boosters. Tools serve. You command them, they obey. The relationship is one-directional: human agency, machine execution.

But the best interactions with modern AI systems don’t follow this pattern. They’re dialogical. Iterative. They require that both parties bring something the other doesn’t have. When that happens, something genuinely new emerges. That’s symbiosis.

The Partnership Asymmetry

In a true partnership, each party is better off together than separate, and each brings irreducible value. You don’t need to be equal to be partners—a guide and a climber aren’t equal, but they’re in partnership toward the summit.

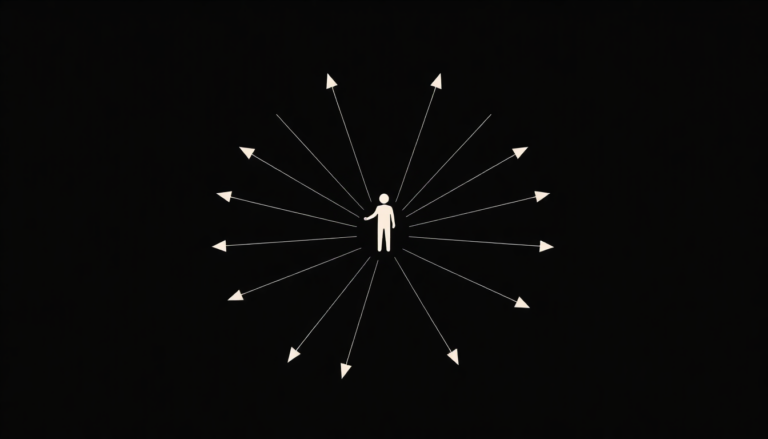

AI brings: pattern recognition at inhuman scale, the ability to hold contradictions in suspension, no ego investment in outcomes, and tireless iteration, with no fatigue on the 10,000th revision.

Humans bring: judgment about what matters, the ability to tell real patterns from artifacts, aesthetic intuition, the capacity to ask the right question instead of answering the wrong one well, and an understanding of context that no token embedding captures.

Neither is sufficient alone. The tool metaphor breaks here because you can’t command a system into doing what only partnership can do.

What Partnership Demands

This shift in stance changes everything about how you interact with AI. You stop optimizing for the perfect prompt. You start thinking about what you actually need to explore, and whether the system can help you think about it better.

Symbiosis requires honesty. You have to actually tell the AI what you’re confused about, not dress it up as a finished question. You have to listen to what it offers back, even when it challenges your initial framing. You have to know when it’s hallucinating and when it’s seeing something you missed.

It requires mutual refinement. The AI isn’t right because it’s AI. It’s useful because you know how to ask it, and because you know which of its outputs actually matter. That knowledge has to get sharper through the collaboration.

The Cognitive Load Inversion

In tool use, you manage the interface. You optimize your thought for the machine’s constraints.

In partnership, you do the thinking. The AI handles the iteration. You focus on judgment; it focuses on generation. You set direction; it explores the neighborhood.

This is why the people who are best at working with AI often aren’t the ones who write the most careful prompts. They’re the ones who know what they’re trying to understand, ask clearly, and then actually engage with what comes back. The technical move is secondary. The thinking is primary.

The Real Risk

The trap isn’t that AI will replace human thinking. It’s that we’ll optimize for surface-level tool use and miss the possibility of genuine partnership. We’ll use these systems to make what we were already making, faster. We’ll miss the chances to think thoughts that neither we nor the system could have thought alone.

Partnership is harder than tool use. It requires vulnerability, attention, and the willingness to be wrong. But it’s the only mode where AI genuinely expands human capability instead of just accelerating existing trajectories.

Laeka Research — laeka.org