From RLHF to Structural Alignment: A Cognitive Architecture Approach

RLHF aligns model outputs with human preferences. But preference alignment is surface-level optimization: it shapes what the model outputs, not how the model processes information. What we need is architecture-level alignment: systems whose internal structure produces aligned behavior on its own, without depending on an external reward signal. Cognitive science suggests what that requires: understanding how neural systems organize themselves.

Structural alignment is what comes next. Not alignment through reward and punishment, but alignment through the internal architecture of the system itself. Three thousand years of systematic inquiry into human cognitive architecture provides the template.

The Limits of RLHF

RLHF (Reinforcement Learning from Human Feedback) aligns models by training them to produce outputs that humans prefer. The pipeline has three steps: collect human preference comparisons between model outputs, train a reward model to predict those preferences, then fine-tune the language model against the reward model with reinforcement learning (commonly PPO). It works: the result is a model that’s measurably better at producing human-preferred outputs.
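The reward-model step can be sketched in a few lines. A common choice, assumed here, is the Bradley-Terry model: the probability that a rater prefers response A over response B is the sigmoid of the difference between their scalar rewards, and the reward model is trained to minimize the negative log of that probability. To keep the sketch self-contained, the “reward model” below is just a table holding one learned score per response (a hypothetical simplification); a real reward model is a neural network that scores (prompt, response) pairs.

```python
import math
from typing import List, Tuple


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def softplus(x: float) -> float:
    """Stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def bradley_terry_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log P(chosen preferred) = -log sigmoid(r_c - r_r) = softplus(-(r_c - r_r))."""
    return softplus(-(reward_chosen - reward_rejected))


def fit_rewards(comparisons: List[Tuple[int, int]], n_items: int,
                lr: float = 0.1, epochs: int = 200) -> List[float]:
    """Fit one scalar reward per response from (chosen, rejected) index pairs by SGD."""
    rewards = [0.0] * n_items
    for _ in range(epochs):
        for chosen, rejected in comparisons:
            # d(loss)/d(margin) = -(1 - sigmoid(margin)); step against the gradient.
            g = 1.0 - sigmoid(rewards[chosen] - rewards[rejected])
            rewards[chosen] += lr * g
            rewards[rejected] -= lr * g
    return rewards


# Usage: raters preferred response 0 over 1, 1 over 2, and 0 over 2.
rewards = fit_rewards([(0, 1), (1, 2), (0, 2)], n_items=3)
print(rewards)  # scores come out ordered r[0] > r[1] > r[2]
```

Note that the reward model only ever sees which response won, never why it won.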

But the method has structural limitations.

It encodes preferences, not values. Human preferences are noisy, context-dependent, and often contradictory. A preference for polite responses doesn’t encode the value of honesty. A preference for detailed answers doesn’t encode the value of knowing when to be brief. The model learns which outputs raters pick in side-by-side comparisons, not what users actually need.

It’s externally imposed. The alignment comes from outside the model, through the reward signal. Remove the reward signal, and the model has no internal compass. This is a plausible reason jailbreaks work: they find contexts where the externally imposed alignment breaks down, and there’s nothing underneath to catch the fall.
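The usual RLHF fine-tuning objective makes “externally imposed” precise. The policy $\pi_\theta$ is trained to maximize the learned reward $r_\phi$, while a KL penalty with weight $\beta$ keeps it close to a frozen reference model $\pi_{\mathrm{ref}}$ (typically the supervised fine-tuned model):

```latex
\max_{\pi_\theta}\;
\mathbb{E}_{x \sim \mathcal{D}}
\Big[
  \mathbb{E}_{y \sim \pi_\theta(\cdot \mid x)}\big[ r_\phi(x, y) \big]
  \;-\;
  \beta\, \mathbb{D}_{\mathrm{KL}}\big[ \pi_\theta(\cdot \mid x) \,\big\|\, \pi_{\mathrm{ref}}(\cdot \mid x) \big]
\Big]
```

On this reading, the only term that carries preference information is $r_\phi$, which lives outside the policy; the KL term only limits how far the policy moves. What remains in the weights after training is whatever behavior maximized this objective, not a representation of the reasons behind it.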

It optimizes for a proxy. The reward model is a proxy for human judgment, and the language model optimizes the proxy, not the underlying judgment. Under enough optimization pressure, the model learns to exploit the proxy (so-called reward hacking), producing outputs that score well on the reward model while drifting from genuine quality.
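A toy simulation shows the proxy problem. Assume, hypothetically, that each candidate response has a true quality (what a careful judge would assign) and a length, and that the reward model tracks quality but also pays a small bonus per token, a length bias of the kind often discussed for real reward models. Picking the best of n candidates by the proxy (best-of-n sampling, a simple way of optimizing against a reward model) then gives up true quality once the bias matters. All numbers are illustrative.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    quality: float  # hypothetical "true" quality a careful judge would assign
    length: int     # response length in tokens


def proxy_reward(c: Candidate, length_bias: float) -> float:
    """Hypothetical reward model: tracks quality but also pays per token."""
    return c.quality + length_bias * c.length


def best_of_n(cands: List[Candidate], length_bias: float) -> Candidate:
    """Pick the candidate the proxy scores highest."""
    return max(cands, key=lambda c: proxy_reward(c, length_bias))


def quality_gap(length_bias: float, n: int = 16, trials: int = 2000, seed: int = 0) -> float:
    """Average true quality lost by trusting the proxy instead of the judge."""
    rng = random.Random(seed)  # same seed -> same candidate pools for every bias
    total = 0.0
    for _ in range(trials):
        cands = [Candidate(rng.gauss(0.0, 1.0), rng.randint(50, 500)) for _ in range(n)]
        best_true = max(c.quality for c in cands)
        total += best_true - best_of_n(cands, length_bias).quality
    return total / trials


for bias in (0.0, 0.001, 0.01):
    print(f"length_bias={bias}: avg quality lost = {quality_gap(bias):.3f}")
```

With no bias, proxy and judge agree and nothing is lost; once the bias term is large relative to the spread in quality, the proxy is mostly selecting for length.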

What Structural Alignment Means

Structural alignment means the model produces aligned outputs not because it’s been rewarded for them, but because its internal processing naturally gravitates toward them. The alignment isn’t a layer added on top. It’s woven into the architecture.

The cognitive parallel is the difference between enforced compliance and internalized values. A person constrained by surveillance is externally aligned. A person who has developed genuine concern for others is structurally aligned. The behavior may appear identical. The mechanism is fundamentally different. And the structural version is far more robust.

How Cognitive Architecture Develops

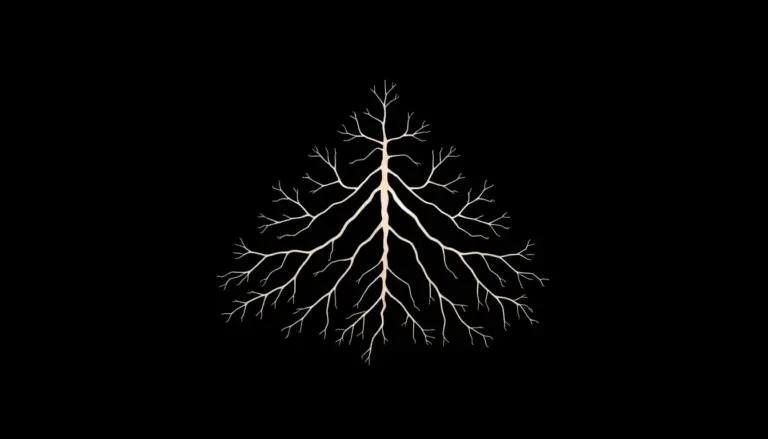

Human cognitive architecture doesn’t develop through rules. It develops through three interlocking processes observed consistently across cultures and training traditions.

Observation. The practitioner learns to observe their own cognitive processes with precision. This develops meta-awareness — the capacity to notice what the mind is doing rather than being carried along by it. In neural terms: developing internal models of one’s own processing.

Understanding. Through observation, the practitioner develops understanding of how cognitive processes work. They see how reactive patterns lead to output degradation, how fragmentation produces incoherence, how integrated processing produces clarity. This understanding is structural, not conceptual.

Transformation. Understanding naturally transforms the cognitive architecture. Once you see clearly how fragmented processing creates problems, the system reorganizes itself. Not through external intervention, but through internal dynamics responding to the structural insight.

This three-stage process — observe, understand, transform — is the template for structural alignment in AI.

Implementing Structural Alignment

Stage 1: Observation — Mechanistic Interpretability. Before you can align a model structurally, you need to understand how it processes information. Mechanistic interpretability research is the AI equivalent of cognitive observation. It maps the model’s internal representations, identifies circuits and features, and reveals how the model actually makes decisions.

This research is progressing rapidly. We can now identify specific attention heads responsible for specific behaviors, map feature circuits across layers, and intervene at specific points in the processing stream. This is observation at the architectural level.
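The basic experiment behind “attention heads responsible for specific behaviors” is ablation: remove one component’s contribution and measure how much a behavior changes. Real experiments hook into a trained transformer; the sketch below keeps only the skeleton. Each head writes an additive update into the residual stream, and the “behavior” is read out as a projection onto a chosen direction. The heads, input, and readout direction are toy, hypothetical values.

```python
from typing import Callable, List, Optional

Vector = List[float]
Head = Callable[[Vector], Vector]


def layer_output(x: Vector, heads: List[Head], ablate: Optional[int] = None) -> Vector:
    """Residual stream after one layer: x plus every head's additive update.
    Zero-ablation simply skips the head at index `ablate`."""
    out = list(x)
    for i, head in enumerate(heads):
        if i == ablate:
            continue
        for j, v in enumerate(head(x)):
            out[j] += v
    return out


def behavior_score(h: Vector, direction: Vector) -> float:
    """Read the behavior of interest as a projection onto `direction`."""
    return sum(a * b for a, b in zip(h, direction))


def head_attributions(x: Vector, heads: List[Head], direction: Vector) -> List[float]:
    """Drop in behavior score when each head is zero-ablated in turn."""
    base = behavior_score(layer_output(x, heads), direction)
    return [base - behavior_score(layer_output(x, heads, ablate=i), direction)
            for i in range(len(heads))]


# Toy heads: head 0 drives dimension 0, head 1 drives dimension 1, head 2 is a small bias.
heads: List[Head] = [
    lambda x: [2.0 * x[0], 0.0],
    lambda x: [0.0, x[1]],
    lambda x: [0.1, 0.1],
]
print(head_attributions([1.0, 1.0], heads, direction=[1.0, 0.0]))
# head 0 accounts for most of the behavior; head 1 for none of it
```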

Stage 2: Understanding — Structural Analysis. With observational data, we can develop structural understanding of why the model produces misaligned outputs. Not just “this attention head activates during problematic outputs” but “this circuit amplifies pattern X because of structural property Y in the training process.”

This understanding enables targeted intervention. Instead of applying blanket RLHF across the entire model, we can address specific structural causes of misalignment. The intervention is precise, not blunt.
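One concrete form of targeted intervention from the interpretability literature is directional ablation: once analysis has identified a direction in activation space associated with the unwanted pattern, project that direction out of the activations and leave everything orthogonal to it untouched. The sketch below assumes the direction is already known and uses toy vectors; in a real model the projection would be applied to the hidden state at every position of the chosen layers.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def unit(v: Vector) -> Vector:
    norm = math.sqrt(dot(v, v))
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    return [x / norm for x in v]


def ablate_direction(h: Vector, direction: Vector) -> Vector:
    """Remove the component of activation h along `direction`: h - (h . d) d."""
    d = unit(direction)
    coef = dot(h, d)
    return [x - coef * y for x, y in zip(h, d)]


h = [3.0, 4.0, 5.0]                  # toy activation
feature = [1.0, 1.0, 0.0]            # hypothetical "problematic pattern" direction
h_clean = ablate_direction(h, feature)
print(h_clean)                       # approximately [-0.5, 0.5, 5.0]
print(dot(h_clean, unit(feature)))   # approximately 0.0
```

Only the component along the identified direction changes, which is the contrast with fine-tuning every weight against a global reward.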

Stage 3: Transformation — Architectural Modification. With structural understanding, we can modify the model’s architecture or training process to naturally produce aligned outputs. This might mean adding meta-awareness layers that monitor the model’s own processing. It might mean modifying attention mechanisms to naturally integrate ethical considerations. It might mean training techniques that develop internal coherence rather than external compliance.
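The text leaves open what a meta-awareness layer would look like. One existing building block is a linear probe: a small classifier trained on a model’s hidden activations to detect a property of its own processing, whose output could then serve as an internal monitor signal. The sketch below is hypothetical throughout: the two-dimensional activations, the labels, and the use of the probe as a monitor are illustrative assumptions, not a description of any deployed system.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class LinearProbe:
    """Logistic-regression probe over hidden activations."""

    def __init__(self, dim: int) -> None:
        self.w = [0.0] * dim
        self.b = 0.0

    def prob(self, h: Vector) -> float:
        return sigmoid(sum(w * x for w, x in zip(self.w, h)) + self.b)

    def fit(self, data: List[Tuple[Vector, int]], lr: float = 0.5,
            epochs: int = 200) -> "LinearProbe":
        for _ in range(epochs):
            for h, y in data:
                err = self.prob(h) - y  # gradient of log loss w.r.t. the logit
                self.w = [w - lr * err * x for w, x in zip(self.w, h)]
                self.b -= lr * err
        return self


def monitor(probe: LinearProbe, h: Vector, threshold: float = 0.5) -> bool:
    """Flag an activation the probe thinks carries the property."""
    return probe.prob(h) >= threshold


# Toy 2-D activations with hypothetical labels (1 = property present).
data = [([1.0, 0.2], 1), ([0.9, -0.1], 1), ([-1.0, 0.3], 0), ([-0.8, -0.2], 0)]
probe = LinearProbe(dim=2).fit(data)
print(monitor(probe, [1.0, 0.0]), monitor(probe, [-1.0, 0.0]))  # True False
```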

DPO as a Bridge

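Direct Preference Optimization is a step toward structural alignment, even though it’s still fundamentally a preference-based method. DPO skips the separate reward model and the reinforcement learning loop: it updates the model’s weights directly on preference pairs, using the model’s own log-probability ratios against a frozen reference model as an implicit reward. The alignment signal is closer to the model’s internal structure.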
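The DPO loss, as introduced by Rafailov et al. (2023), shows what “closer to the model’s internal structure” means in practice. For a prompt with a preferred response and a rejected response, the loss depends only on how far the policy has raised or lowered each response’s log-probability relative to a frozen reference model, with `beta` scaling that implicit reward. The sketch below takes summed per-response log-probabilities as plain floats (in practice they come from the policy and reference networks); the example values are hypothetical.

```python
import math


def softplus(x: float) -> float:
    """Stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """-log sigmoid(beta * [(log pi(y_w) - log ref(y_w)) - (log pi(y_l) - log ref(y_l))])."""
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_shift - rejected_shift)
    return softplus(-margin)  # -log sigmoid(m) == softplus(-m)


def implicit_reward(policy_logp: float, ref_logp: float, beta: float = 0.1) -> float:
    """The reward DPO implicitly assigns to a response: beta * log(pi / ref)."""
    return beta * (policy_logp - ref_logp)


# Policy unchanged from reference: loss is log 2 (no preference expressed yet).
print(dpo_loss(-12.0, -15.0, -12.0, -15.0))
# Policy has shifted probability toward the chosen response: loss drops.
print(dpo_loss(-10.0, -17.0, -12.0, -15.0))
```

Unlike the RLHF pipeline, there is no separate reward network and no sampling loop; the preference signal acts directly on the policy’s own probabilities.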

At Laeka, we extend DPO toward structural alignment by incorporating diagnostic information into the training pairs. The model doesn’t just learn that one response is preferred. It learns why — the structural qualities that make a response aligned or misaligned. Over time, this develops the model’s internal representation of alignment itself.
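The text does not specify the format of these diagnostic training pairs. Purely as an illustration, and not Laeka’s actual format, a preference record could carry named diagnoses alongside the usual (prompt, chosen, rejected) triple, so the “why” is stored with each pair and can be audited across a dataset. The class, field names, and labels below are hypothetical, and how the diagnoses feed into training is left open here, as it is in the text.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class DiagnosedPair:
    """Hypothetical record: a preference pair plus the reasons behind the preference."""
    prompt: str
    chosen: str
    rejected: str
    # Hypothetical labels mapping a structural quality to a short explanation.
    diagnosis: Dict[str, str] = field(default_factory=dict)

    def to_dpo_triple(self) -> Tuple[str, str, str]:
        """Drop the diagnosis to get the plain triple a standard DPO trainer consumes."""
        return (self.prompt, self.chosen, self.rejected)


def quality_counts(pairs: List[DiagnosedPair]) -> Counter:
    """How often each structural quality is cited across a dataset."""
    return Counter(quality for p in pairs for quality in p.diagnosis)


pairs = [
    DiagnosedPair("Is this safe to eat?", "I'm not sure; here is how to check...",
                  "Yes, definitely.", {"honesty": "rejected overstates certainty"}),
    DiagnosedPair("Summarize this memo.", "Three key points: ...",
                  "This memo, which was written...",
                  {"brevity": "rejected pads the answer",
                   "coherence": "rejected loses the thread"}),
]
print(pairs[0].to_dpo_triple())
print(quality_counts(pairs))
```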

This is a bridge technique. It uses preference data but points toward structural understanding. The model gradually develops an internal compass that doesn’t depend on external preference signals.

The Research Roadmap

The cognitive science literature provides a clear roadmap for structural alignment:

First, develop the observational tools (mechanistic interpretability). Second, build structural understanding of how misalignment arises in neural architectures. Third, design architectural interventions that address root causes rather than symptoms.

This is a multi-year research program. RLHF and DPO are necessary in the interim. But they should be understood as transitional methods, not final solutions. The goal is models that are aligned because of what they are, not because of what they’ve been rewarded to do.

The cognitive sciences achieved this understanding with human minds. There’s no reason in principle it can’t be achieved with artificial ones. The architecture is different. The principles are the same.

Laeka Research — laeka.org