ChatGPT and Client Confidentiality: Why Your Firm Is at Risk

You’ve probably already done it. Pasted a contract excerpt into ChatGPT for a quick summary. Asked for clause analysis. Typed a legal question including case details. It’s convenient, it’s fast, and it’s potentially catastrophic for your firm.

The problem nobody wants to see

When you enter information into ChatGPT, that data is sent to OpenAI and processed on servers outside Quebec, largely in the United States. On consumer plans, OpenAI may use it to train its models unless you have explicitly opted out. Confidential case details could end up, in some form, in responses given to other users.

For a professional bound by attorney-client privilege, that’s a serious breach. Quebec’s Bar Association has issued clear guidelines: using generative AI tools with client data must meet the same confidentiality standards as any other technology.

Bill 25 adds another layer of risk

Since September 2023, Bill 25 on personal information protection has imposed strict obligations on Quebec organizations. Transferring personal information outside Quebec requires a privacy impact assessment before the transfer takes place. Penal fines can reach 25 million dollars or 4% of worldwide revenue, whichever is greater.
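To make the exposure concrete, here is a rough sketch of the arithmetic. It assumes the ceiling is the greater of the two amounts, which is how the penal fine provision is generally read. The function name and the sample revenue figures are hypothetical, and actual fines are set case by case.

```python
def bill25_fine_ceiling(worldwide_revenue_cad: int) -> float:
    """Upper bound of a penal fine: the greater of a fixed cap or 4% of revenue.

    Assumption: the cap is "whichever is greater"; this is an illustration,
    not legal advice.
    """
    fixed_cap = 25_000_000                         # 25 million dollars
    revenue_share = worldwide_revenue_cad * 4 / 100  # 4% of worldwide revenue
    return max(fixed_cap, revenue_share)


# A firm with 10 million in revenue is still exposed to the fixed cap.
small_firm = bill25_fine_ceiling(10_000_000)
# For a group with 1 billion in revenue, the 4% figure takes over.
large_group = bill25_fine_ceiling(1_000_000_000)
```

The point for a small firm is that the fixed cap dominates: the theoretical maximum does not shrink with revenue.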

Each time a lawyer at your firm pastes client information into ChatGPT, they’re potentially making a cross-border data transfer without the safeguards the law requires.

The real risks

Professional conduct risk. The Code of Professional Conduct requires maintaining attorney-client privilege. A breach can trigger disciplinary action, even disbarment.

Legal risk. A client discovering their confidential data was shared with an American AI tool could sue for professional malpractice.

Reputational risk. In a market where trust is currency, a data leak — even accidental — can destroy years of reputation.

Financial risk. Bill 25 fines, potential lawsuits, and lost clients can add up fast.

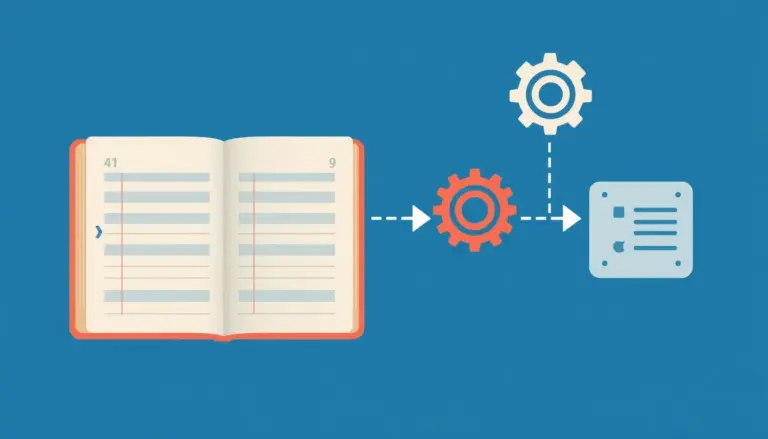

The solution: private, sovereign AI

The good news is you can harness AI’s power without compromising confidentiality. The answer: AI systems deployed on Canadian servers, dedicated to your firm, with zero data sharing with third parties.

A private RAG system runs in an isolated environment. Your data never leaves Canada. It’s never used to train third-party models. You retain full control over who accesses what.
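The idea of controlling "who accesses what" can be sketched in a few lines. This is a minimal illustration, not a description of any particular product: the `Chunk` type, the matter identifiers, and the `retrieve` function are hypothetical, and a simple keyword match stands in for the vector search a real RAG system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    matter_id: str  # client matter the passage belongs to (hypothetical field)
    text: str


def retrieve(query: str, chunks: list[Chunk], allowed_matters: set[str]) -> list[Chunk]:
    """Return matching passages, restricted to matters the user may access."""
    terms = {word.lower() for word in query.split()}
    # Apply the access filter BEFORE searching, so restricted text is never
    # considered, let alone passed to the language model.
    visible = [c for c in chunks if c.matter_id in allowed_matters]
    return [c for c in visible if terms & set(c.text.lower().split())]


library = [
    Chunk("M-001", "lease termination clause"),
    Chunk("M-002", "lease renewal terms"),
]
# A lawyer assigned only to matter M-001 never sees M-002 material.
results = retrieve("lease", library, allowed_matters={"M-001"})
```

Filtering before retrieval, rather than after generation, is the design choice that matters here: a passage the user cannot access never reaches the model's context.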

Steps to take right now

While you roll out a private AI solution, put these measures in place immediately. Establish a clear AI usage policy that bans sharing client data with free tools. Train your staff, including lawyers, paralegals, and students, on the risks. Document your compliance so you can demonstrate due diligence if audited.

Move to AI safely

At Laeka, we deploy AI solutions hosted exclusively in Canada, compliant with Bill 25 and Bar Association requirements. Our systems are designed around attorney-client privilege from the ground up, rather than having protections added afterward.

Book your 30-minute discovery call to assess your current risk level and explore safe alternatives. → laeka.org/services