Can AI Think? The Real Answer Might Surprise You.

“Can AI think?” That’s THE question everyone asks when the topic comes up at dinner. And the answer you’ll get depends on who you’re talking to.

The engineer will say no. The philosopher will say “it depends on your definition of thinking.” Your brother-in-law will say “of course, look, it wrote me a poem!”

The real answer? The question is poorly framed. And that’s what makes it interesting.

What does “thinking” even mean?

Before asking if AI thinks, we’d need to agree on what “thinking” means. And that’s where it gets complicated.

When you think about what to have for dinner, that’s thinking. When you solve a math problem, that’s thinking. When you wonder if your ex is doing okay, that’s thinking. But these three things are completely different. The word “thinking” is a catch-all.

AI can do some of these things. It can solve math problems. It can generate dinner options. But it doesn’t “wonder” if your ex is okay. It has no emotional experience. It has no inner life.

What AI does really well

AI is the world champion of a very specific type of “thinking”: pattern processing. Give it data and it’ll find regularities you’d never have spotted.

A radiologist looks at a scan and sees a suspicious spot. AI looks at the same scan and draws on patterns learned from, say, 500,000 scans it has already processed. It can sometimes flag cancers that the human eye misses. That’s impressive. But is it “thinking”?

It’s like asking if your calculator “understands” math. It gives the right answers without understanding anything. AI is similar, but it works at such a massive scale that the result looks like understanding.

The philosophical zombie test

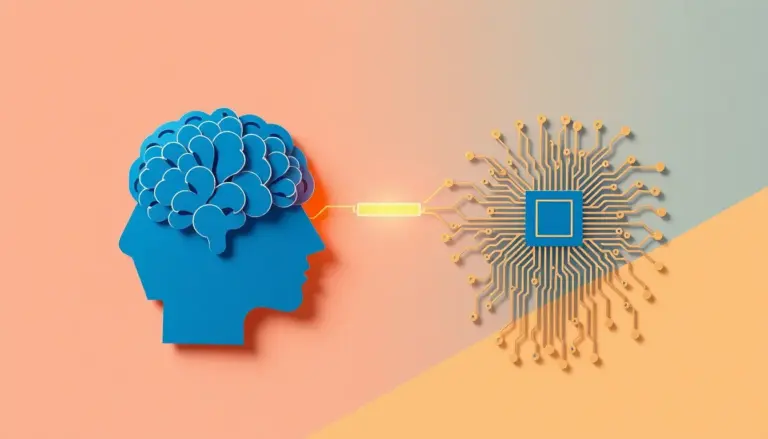

Philosophers have a useful concept here: the philosophical zombie. Imagine someone who acts exactly like a human — they laugh, they cry, they tell you they’re in pain — but they feel nothing inside. Zero subjective experience. From the outside, you can’t tell the difference.

ChatGPT is a philosophical zombie. It produces text that has all the characteristics of human thought. It argues, it adds nuance, it cracks jokes. But inside? There’s nothing. It’s mathematical calculations on word probabilities.
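
To make “calculations on word probabilities” a bit more concrete, here is a toy sketch in Python. It is not how ChatGPT is built (real systems use large neural networks, not word counts), and the tiny training text and every function name here are made up for illustration. It only shows the basic move: count which word tends to follow which, turn the counts into probabilities, and pick the next word accordingly.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption, for illustration only).
CORPUS = "the cat sat on the mat . the dog sat on the rug ."


def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn the raw follow-counts for one word into probabilities."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def generate(follows, start, length, seed=0):
    """Build a sentence by repeatedly sampling a likely next word."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    out = [start]
    for _ in range(length):
        probs = next_word_probabilities(follows, out[-1])
        if not probs:  # no known continuation for this word
            break
        words, weights = zip(*probs.items())
        out.append(rng.choices(words, weights=weights)[0])
    return " ".join(out)


model = train_bigrams(CORPUS)
print(next_word_probabilities(model, "the"))
print(generate(model, "the", 5))
```

The output can look like a sentence, yet nothing in this loop knows what a cat or a mat is. It only counts and samples.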

And here’s the twist: that doesn’t change how useful it is. Whether AI “thinks” or not, it can still help you write your resume, understand your taxes, or plan your trip. The question of thought is philosophically fascinating, but practically, what matters is: does it work?

Why the question still matters

Even though it’s a poorly framed question, it reveals something important: we project our humanity onto our tools. We’ve always done it. We name our cars. We talk to our plants. And now, we think ChatGPT “understands” us.

That’s fine as long as we stay aware of what we’re doing. The danger is when we forget it’s a tool. When we trust it like we’d trust a friend. When we hand it important decisions without checking.

AI doesn’t think. But it’s still incredibly useful. And understanding that distinction is the foundation of everything.

At Laeka Research, we study exactly this grey zone between what AI does and what we think it does. And with Sherpa, we help you navigate that zone without getting lost. Free, made here.